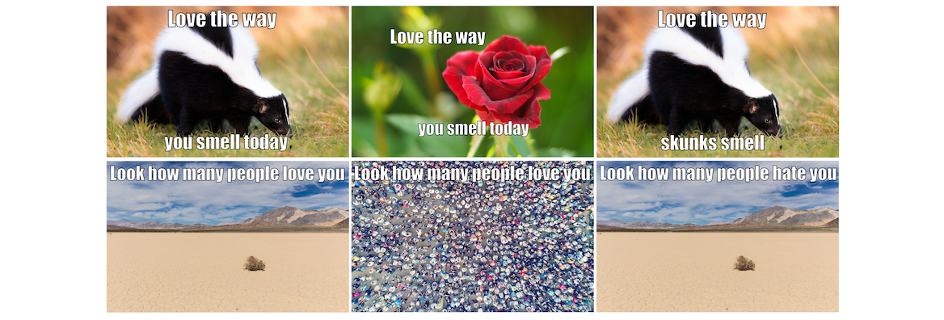

The competition asked teams to devise algorithms that can determine whether a meme – consisting of an image and overlaid short text – is hateful or benign.

The team was led by School of Engineering PhD student Grzegorz Jacenków, and included Professor Sotirios Tsaftaris, the School’s Chair in Machine Learning and Computer Vision, and colleagues from the Alan Turing Institute, University of Warwick, University of Birmingham, Queen Mary University of London, the University of Oxford, and Canon Medical Research Europe.

Rise in online hate

The explosive growth of social media has been transformative in allowing people to quickly share ideas and content, but this same ease of communication has also led to a rise in ‘online hate’, which often targets vulnerable groups and communities, toxifies public discourse and exacerbates social tensions.

Pressure is growing on major platforms to remove such content, but the sheer volume of online posts makes manual moderation of social media conversations near impossible, necessitating the use of automated tools.

An AI challenge

While technology has evolved rapidly to counter online toxicity expressed either as text or images, automated hate detection systems are less effective when toxicity arises from the combination of an image and text.

Such “memes”, which use a nuanced interaction between images and text to cause offence, remain particularly difficult to detect using the latest artificial intelligence (AI) algorithms, which are often trained to handle just one modality – for example just text, or just images.

In response, the team developed a more sophisticated algorithm that detects hateful content by modelling the complex interplay between image and text inputs, allowing it to interpret different combinations of the two more accurately.

Pushing the boundaries of algorithms

Grzegorz Jacenków, who is studying for a PhD in machine learning and medical imaging in the School’s Institute for Digital Communications, said:

Acknowledgements

The competition team were:

- Grzegorz Jacenków, PhD Candidate and Team Lead, University of Edinburgh

- Professor Sotirios Tsaftaris, Chair in Machine Learning and Computer Vision and Turing Fellow, University of Edinburgh

- Dr Alison O’Neil, Senior AI Scientist, Canon Medical Research Europe

- Professor Maria Liakata, AI Fellow, Queen Mary University of London and The Alan Turing Institute

- Professor Helen Margetts, Programme Director for Public Policy and Turing Fellow, University of Oxford and The Alan Turing Institute

- Dr Bertie Vidgen, Research Fellow in Online Harms, The Alan Turing Institute

- Dr Elena Kochkina, Postdoctoral Researcher, University of Warwick and The Alan Turing Institute

- Dr Harish Tayyar Madabushi, Lecturer, The University of Birmingham

The team was generously supported with Azure server credits by The Alan Turing Institute and given access to JADE, the largest graphics processing unit (GPU) facility in the UK, which is funded by the Engineering and Physical Sciences Research Council (EPSRC) for world-leading research in machine learning.

Further Information

- Driven Data Hateful Memes Challenge

- The Hateful Memes Challenge: Detecting Hate Speech in Multimodal Memes

- Grzegorz Jacenków profile, School of Engineering, University of Edinburgh

- Institute for Digital Communications, School of Engineering, University of Edinburgh

- VIOS: a collaboration involving several of the competition team, using artificial intelligence, computer vision and inverse problems, to address societal problems by solving key challenges in the life and natural sciences.